What is it about?

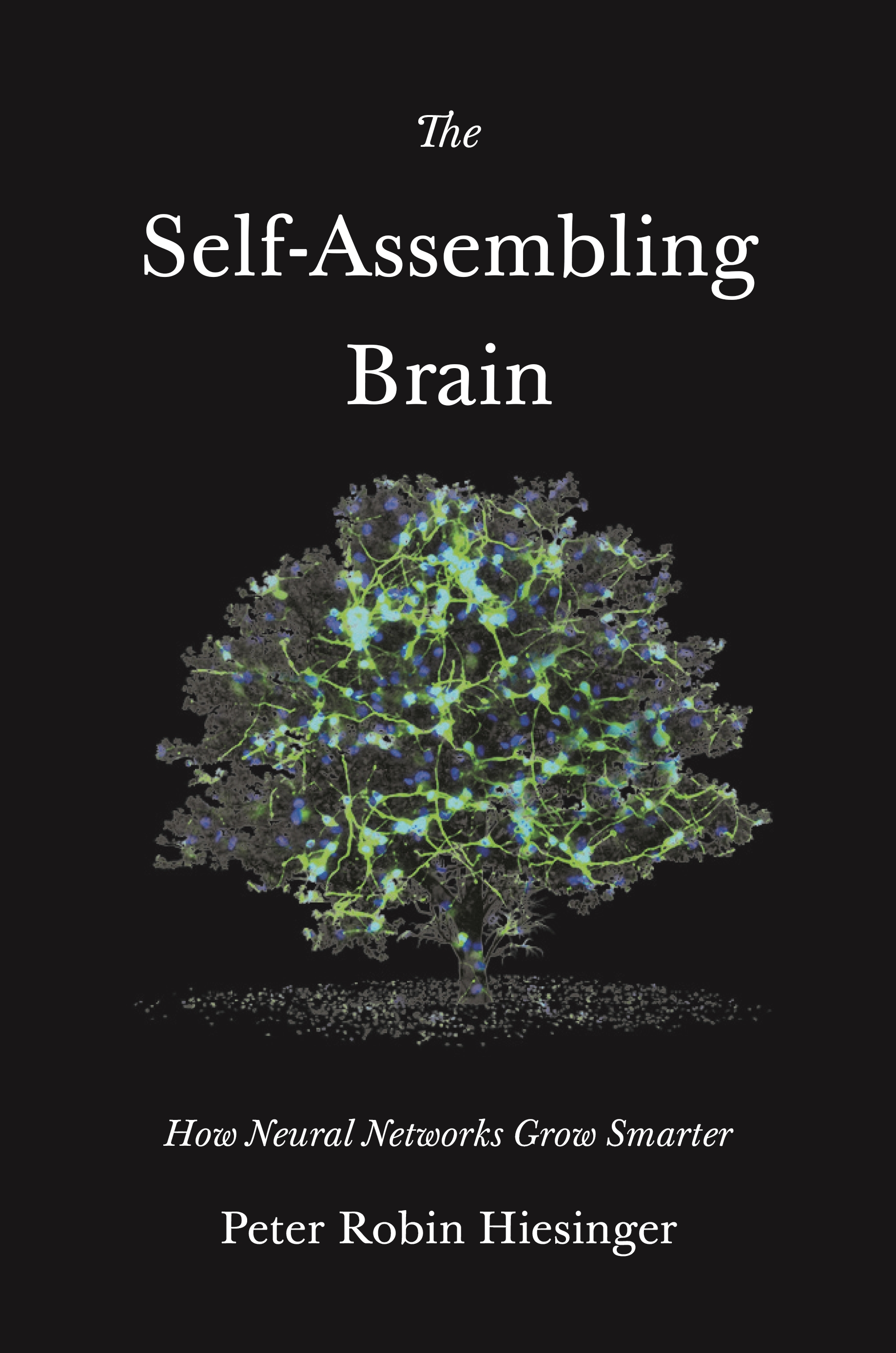

Neural networks are the basis of biological brains and artificial intelligence alike. The Self-Assembling Brain seeks an answer to the question: How does a neural network become a brain?

The smarter the network, the more information we need to describe it. Where does the information come from and how did it get in there? First, information enters during the initial making of a neural network, that is, its connectivity. This takes some time and energy. Next, the network learns. This takes time and energy, too. What is the relative importance of these two sources of information? This is where biological and artificial systems differ dramatically: one grows, the other has an ‘on’ switch. There is an ongoing debate about what either type of information can achieve, how each is represented in the network, how the two work together, and when either or both are needed at all.

I call this the information problem.

Biologists like to pit ‘genes’ against ‘learning’ – nature versus nurture. We know that the genes somehow encode many aspects of neural networks prior to learning. But what does ‘encode’ mean? The genes do not describe neural networks; they are the basis of an algorithmic growth process that results in neural networks capable of astonishing feats already prior to learning. What do I mean by ‘algorithmic growth’? Well, that’s what The Self-Assembling Brain is about.

AI engineers design artificial neural networks that need to learn. Machine learning encompasses supervised learning, unsupervised learning, and reinforcement learning. No genes. No developmental growth. The basis of AI applications is artificial neural networks that are designed and then switched on to learn. They can learn from big data or through self-learning. Artificial neural networks can accomplish little prior to learning, but master more and more astonishing feats once they have learned enough. Can a data-fed or self-learned AI become a ‘human-level AI’ without genes and development? Well, that’s what The Self-Assembling Brain is about.

Is it for me?

I wrote The Self-Assembling Brain for anyone interested in the question of how neural networks, both biological and artificial, grow smarter. If you are interested in how the brain relates to efforts in AI, and why the brain has to grow to develop intelligence, this book is for you.

I collected feedback on the ideas and chapters of The Self-Assembling Brain from students, scientists inside and outside my lab, and interested friends with no connection to academic science. Some of the most valuable feedback came from this last group, and the book was written with them in mind.

What does it look like inside?

The Self-Assembling Brain tells a story. Two stories, really. First, there is the true story of the remarkable scientists who study how the brain comes to be, and those who try to make one from scratch. This story is told in a series of ten seminars, beginning with the shared history of both fields.

And then there is the story of the imaginary workshop where the seminars take place. In between the ten seminars, four participants at the workshop engage in heated discussions. They are a neuroscientist, an AI researcher, a developmental geneticist, and a robotics engineer. Can they possibly agree? You can read their backstory here.