In his book ‘The Myth of Artificial Intelligence’, Erik Larson argues that there is no known instance of any known type of intelligence producing something more intelligent than itself. Of course, past failure need not predict future failure. Unless there is a fundamental roadblock. So, let’s explore what can produce intelligence, shall we?

The futurist’s notion remains that a future (higher) intelligence will rather suddenly gain the new ability to produce more intelligence. The idea is that we are on the right path, we just haven’t reached the threshold yet. At what point that fundamental switch will happen is currently unknown. Futurists propose a ‘singularity’ based on the idea of intelligent systems producing ever more intelligent systems. The proposed singularity is the point after which there will be an exponential (or faster-than-exponential) increase of intelligence based on ever more intelligent systems producing ever more intelligent systems. The idea takes off if, and only if, the proposition is true that intelligent systems can produce more intelligent systems. This is a condition for the singularity, not the singularity itself. If the proposition is not true, then the singularity is a nonstarter. If the proposition is true, it is still not sufficient. Not only must a new type of future and higher intelligence be able to produce a more intelligent system, but each new more intelligent system must also have that elusive ability of producing ever more intelligent systems. The futurists’ argument that this will be so is based on our inability to refute anything futuristic that hasn’t happened yet and for which there is no template.

Alas, there is of course one template for producing intelligent systems; it is just not based on intelligent systems. Evolution. Evolution has produced all intelligent systems we know of in the known universe. Evolution has been at it for billions of years, generating intelligent systems from butterflies to humans. None of these intelligent systems, neither butterflies nor humans, has thus far produced systems that are:

(1) more intelligent than themselves, and

(2) able to produce yet more intelligent systems.

(1) is a matter of some debate. Humans have developed pocket calculators that are much better at adding sums than humans, planes that fly longer distances than birds, and artificially intelligent systems that play chess better than any other intelligent system known to man. Since computer processing power grows exponentially, the argument goes, it is only a matter of time until we reach the elusive threshold. But faster versions of motor scooters will never fly to the moon, no matter how exponentially their speed increases. The proposition requires the exponentially faster systems to produce fundamentally new things, not just faster things.

A butterfly can produce a version of itself with the ability to navigate a 3,000-mile migration route, even though the butterfly that produced that intelligent system could not navigate the route itself. The evolutionary history of living systems is a history of re-programming and growing ever more intelligent systems based on the consumption of vast amounts of time and energy. The development of pocket calculators, airplanes and chess-playing AI systems also takes a lot of time and energy, albeit on a much smaller scale. As AI systems get more and more intelligent, their developmental processes consume more and more time and energy. Both the feeding of big data and reinforcement learning are iterative learning processes that require ever more time and energy to achieve more intelligent systems. There is no indication of a shortcut. The hope lies in ever faster computers and better learning algorithms. It remains unclear how much time and energy it may take to develop what we call human intelligence. The uncertainty hinges on the question of whether there is a shortcut to producing human intelligence. A shortcut, that is, to shorten the current record of 9 months in the womb and decades of growth.

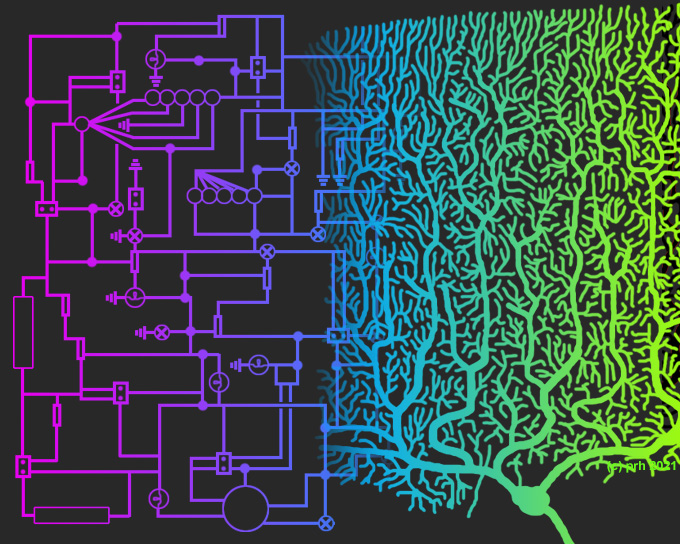

Evolution requires the genome – and the growth process it encodes – in all its glory to re-program, test, and select outcomes, generation after generation. This is an iterative and immensely time- and energy-consuming process. It can produce ever more intelligent systems at the end of a distribution, as it has done for millennia. In doing so, it has certainly tried out a lot of options for making the process less time- and energy-consuming. Evolution is pretty good at optimizing systems, including the production of different types of intelligence. How come evolutionary principles are not a focus of discussion in the AI community?

Well, this brings us to (2), see above, as a matter of debate. As many parents have experienced, humans are, like butterflies, able to produce more intelligent systems than themselves. It just takes 9 months in the womb and decades of growth. The human prefrontal cortex is not fully developed until the mid-twenties. Ask any teenager. Improvements to this developmental process rely on further generations of trial and error. The real question is simply: will there ever be a shortcut, an artificially intelligent version, that can do this faster, and ever faster? Or is there no shortcut to the growth and evolutionary programming of intelligent systems? We do not know the answer, but it is surely worth a discussion across disciplines. Welcome to The Self-Assembling Brain.